Lossless compression method to shorten a string before base64 encoding?

Date: 2023-09-30

Question

I just built a small web app for previewing HTML documents. It generates URLs that contain the HTML (including all inline CSS and JavaScript) as base64-encoded data. The problem is that the URLs quickly get rather long. What is the de facto standard way (preferably in JavaScript) to compress the string first without losing any data?

PS: I read about Huffman and Lempel-Ziv coding in school a while ago, and I remember really enjoying LZW :)

Solution found: it seems that rawStr => utf8Str => lzwStr => base64Str is the way to go. I'm now working on adding Huffman compression between the UTF-8 and LZW steps. The problem so far is that too many characters become very long once they are encoded to base64.
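
If lzwStr holds one JavaScript character per LZW code, that string has to be UTF-8 encoded again before base64, and UTF-8 spends two bytes on every code from 128 upward and three bytes on every code from 2048 upward; that may be where the length goes. Below is a minimal sketch of one workaround, assuming (this is not from the post) that the LZW dictionary is capped at 4096 entries so that every code fits in 12 bits: pack the integer codes straight into bytes and base64url-encode those. `packCodes`, `unpackCodes`, `naiveEncode` and `packedEncode` are hypothetical helper names, and the sample codes are made up for illustration.

```javascript
// Hypothetical helpers, not from the original post. Assumption: the LZW step
// caps its dictionary at 4096 entries, so every code fits in 12 bits.
const CODE_BITS = 12;

// Pack fixed-width integer codes into bytes, most significant bit first.
function packCodes(codes) {
  const out = [];
  let acc = 0; // bits not yet written out
  let bits = 0; // how many bits acc currently holds
  for (const code of codes) {
    if (code < 0 || code >= (1 << CODE_BITS)) {
      throw new RangeError("code does not fit in " + CODE_BITS + " bits: " + code);
    }
    acc = (acc << CODE_BITS) | code;
    bits += CODE_BITS;
    while (bits >= 8) {
      bits -= 8;
      out.push((acc >>> bits) & 0xff);
    }
    acc &= (1 << bits) - 1; // keep only the bits not written yet
  }
  if (bits > 0) out.push((acc << (8 - bits)) & 0xff); // zero-pad the last byte
  return Uint8Array.from(out);
}

// Inverse of packCodes. The zero padding is shorter than one code,
// so it is never read back as a code.
function unpackCodes(bytes) {
  const codes = [];
  let acc = 0;
  let bits = 0;
  for (const b of bytes) {
    acc = (acc << 8) | b;
    bits += 8;
    while (bits >= CODE_BITS) {
      bits -= CODE_BITS;
      codes.push(acc >>> bits);
      acc &= (1 << bits) - 1;
    }
  }
  return codes;
}

// URL-safe base64 without "=" padding (the RFC 4648 "base64url" alphabet).
function toBase64Url(bytes) {
  let bin = "";
  for (const b of bytes) bin += String.fromCharCode(b);
  return btoa(bin).replace(/\+/g, "-").replace(/\//g, "_").replace(/=+$/, "");
}

// The string route: one char per code, UTF-8 encode that string, then base64.
function naiveEncode(codes) {
  const lzwStr = String.fromCharCode(...codes);
  return toBase64Url(new TextEncoder().encode(lzwStr));
}

// The packed route: exactly 12 bits per code, then base64.
function packedEncode(codes) {
  return toBase64Url(packCodes(codes));
}

const sample = [72, 256, 300, 1000, 2048, 4095];
console.log(naiveEncode(sample).length); // 18
console.log(packedEncode(sample).length); // 12
```

For these six codes the string route costs 13 UTF-8 bytes (18 base64 characters) while packing costs 9 bytes (12 characters). The string route can also lose data once codes reach the surrogate range 0xD800 to 0xDFFF, because `TextEncoder` replaces unpaired surrogates with U+FFFD. A variable code width, starting at 9 bits and growing as the dictionary fills, would save a little more at the cost of a more involved reader.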

Recommended answer

Check out this answer. It mentions functions for LZW compression/decompression (via http://jsolait.net/, specifically http://jsolait.net/browser/trunk/jsolait/lib/codecs.js).
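
The linked jsolait code is not reproduced here. As a stand-in, the sketch below is a minimal LZW compressor and decompressor that works on UTF-8 bytes (via `TextEncoder`/`TextDecoder`) rather than on a JavaScript string; the names `lzwCompress` and `lzwDecompress` and the 4096-entry dictionary cap are assumptions of this sketch, not jsolait's API. The decoder rebuilds the same dictionary as the encoder, one step behind, which is why it has to handle a code that is not in its table yet.

```javascript
// Minimal LZW over bytes. Not jsolait's code; the function names and the
// 4096-entry dictionary cap (12-bit codes) are assumptions of this sketch.
const MAX_CODES = 4096;

function lzwCompress(bytes) {
  // Keys are byte sequences stored as strings with one char (0-255) per byte.
  const dict = new Map();
  for (let i = 0; i < 256; i++) dict.set(String.fromCharCode(i), i);
  let next = 256;
  let w = "";
  const codes = [];
  for (const b of bytes) {
    const wc = w + String.fromCharCode(b);
    if (dict.has(wc)) {
      w = wc; // keep extending the current match
    } else {
      codes.push(dict.get(w)); // emit the longest known prefix
      if (next < MAX_CODES) dict.set(wc, next++); // learn the new sequence
      w = String.fromCharCode(b);
    }
  }
  if (w !== "") codes.push(dict.get(w));
  return codes;
}

function lzwDecompress(codes) {
  if (codes.length === 0) return new Uint8Array(0);
  const dict = [];
  for (let i = 0; i < 256; i++) dict.push([i]);
  let w = dict[codes[0]];
  if (w === undefined) throw new Error("invalid first LZW code: " + codes[0]);
  const out = [...w];
  for (let k = 1; k < codes.length; k++) {
    const code = codes[k];
    let entry;
    if (code < dict.length) {
      entry = dict[code];
    } else if (code === dict.length) {
      entry = [...w, w[0]]; // encoder used the entry it had only just created
    } else {
      throw new Error("invalid LZW code: " + code);
    }
    for (const b of entry) out.push(b);
    if (dict.length < MAX_CODES) dict.push([...w, entry[0]]); // mirror the encoder's insert
    w = entry;
  }
  return Uint8Array.from(out);
}

const html = "<p>hello hello hello hello</p>";
const bytes = new TextEncoder().encode(html);
const codes = lzwCompress(bytes);
const roundTrip = new TextDecoder().decode(lzwDecompress(codes));
console.log(bytes.length, codes.length, roundTrip === html);
```

No dictionary has to be stored alongside the data: the decoder reconstructs it from the code stream, so only the codes themselves need to go into the URL.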

这篇关于在base64编码之前缩短字符串以使其更短的无损压缩方法?的文章就介绍到这了,希望我们推荐的答案对大家有所帮助,也希望大家多多支持跟版网!

相关文章

- 使用 <a href="data; 是否安全?...">显示图像

- 为什么在 crossOrigin = 'Anonymous' 图像上设置 base64 数据时,Safa

- 使用javascript获取Base64 PNG的像素颜色?

- 在 JavaScript 中下载 PDF Blob 时出现问题

- Javascript - 从 base64 图像获取扩展名

- 如何在 JavaScript 中缩放图像(数据 URI 格式)(实际缩放,不使用样式)

- 在 Chrome 中打开 blob objectURL

- 使用 localStorage 进行 javascript 字符串压缩

- 如何解码 base64 编码的字体信息?

- 将 dataURL(base64) 保存到 PhoneGap (android) 上的文件

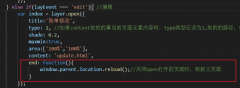

layer.open打开的页面关闭时,父页面刷新的方法layer.open打开的页面关闭时,父页面刷新的方法,在layer.open中添加: end: function(){ window.parent.location.reload();//关闭open打开的页面时,刷新父页面 }

layer.open打开的页面关闭时,父页面刷新的方法layer.open打开的页面关闭时,父页面刷新的方法,在layer.open中添加: end: function(){ window.parent.location.reload();//关闭open打开的页面时,刷新父页面 }